Master DevOps & Cloud with Real-World Demos

21 hands-on courses on AWS, Azure, GCP, Kubernetes, Terraform & Docker. Learn by building real infrastructure, not watching slides.

What I Teach

Multi-cloud expertise across the technologies that matter most. Every course includes step-by-step demos and companion GitHub repos.

Terraform (Multi-Cloud)

7 courses covering HashiCorp certification and real-world IaC on AWS, Azure & GKE. Terraform is my primary area of expertise.

Kubernetes (EKS/AKS/GKE)

5 courses on managed Kubernetes across all 3 major clouds, including Helm, AGIC Ingress, and production architectures.

AWS Services

Fargate, CloudFormation, Elastic Beanstalk, CodePipeline, VPC Transit Gateway, and more. Deep AWS expertise.

DevOps & Docker

Real-world DevOps project implementation on AWS. Docker fundamentals to production with 40+ practical demos.

GCP Certification

Google Associate Cloud Engineer certification prep with 150 practical demos. Complete hands-on learning path.

MLOps & AI

Infrastructure to Intelligence. MLOps on AWS, Azure & GCP. AI certification courses coming in 2026.

Why Engineers Choose StackSimplify

Not theory. Not slides. Real infrastructure you build with your own hands.

100% Hands-On Demos

Every course is built around real-world practical demos. You build actual infrastructure rather than watching PowerPoint presentations.

GitHub Repos for Every Course

57 public repositories with step-by-step documentation. Fork, follow along, and have working code from day one.

Multi-Cloud Coverage

AWS, Azure, and GCP in a single curriculum. Learn cloud-agnostic patterns and platform-specific implementations side by side.

What Students Say

Real reviews from engineers who learned DevOps, Terraform & Kubernetes with StackSimplify courses.

"This isn't just another Kubernetes tutorial. It's a production-grade, automation-rich, cloud-native implementation that mirrors what top tech companies deploy in real environments."

"Excellent content and well articulated workshops designed to pass not only Terraform certification but also gives practical exposure to Infrastructure as Code. Keep it up. Thank you!"

"There are no words to describe my excitement for taking this course. It seems absolutely amazing!"

"Each and every concept explained clearly and easy manner, with steps in the GitHub repo and slides explaining everything. HIGHLY RECOMMENDED!!!"

"An incredibly well-organized and practical course that mirrors real-world application perfectly. I highly recommend it!"

"A very well-explained course, highly recommended for anyone looking to get into DevOps and understand how things work in real-world production environments."

Weekly DevOps & Cloud insights from a Udemy instructor with 383K+ students

Get Terraform tips, Kubernetes troubleshooting guides, cost optimization strategies, and early access to new courses. Join for free.

Hi, I'm Kalyan Reddy Daida

DevOps & SRE Architect with 18+ years of experience designing complex cloud infrastructure. I've helped 383,000+ engineers worldwide master DevOps through practical, real-world courses.

I believe in learning by doing. Every one of my 21 courses comes with a companion GitHub repository so you can follow along step-by-step. My mission is simple: take the complexity out of cloud infrastructure and make it accessible to everyone.

Latest from the Blog

DevOps insights, tutorials, and cloud tips

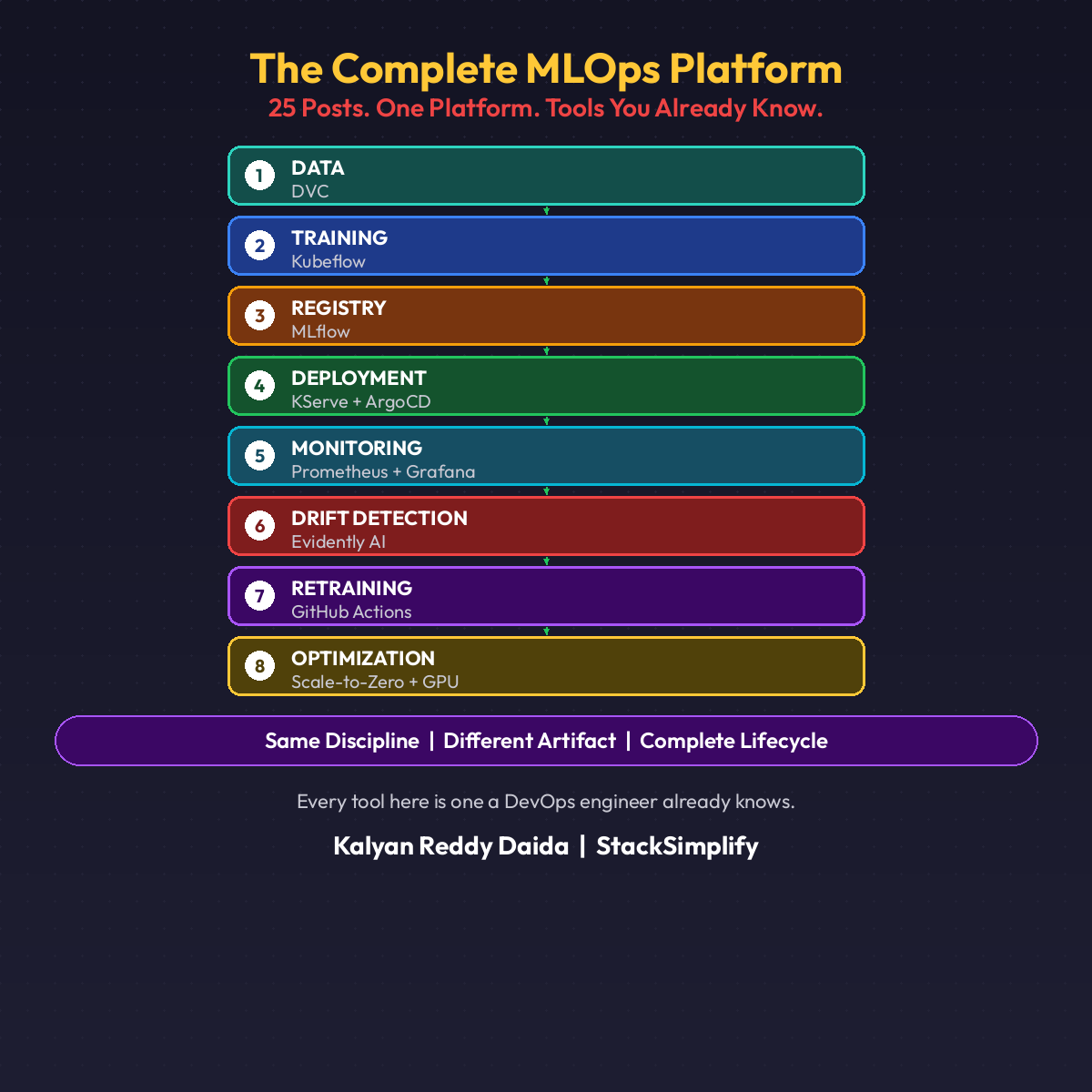

The Complete MLOps Platform: 25 Posts, 8 Layers, One Architecture

25 posts. One platform. Every tool a DevOps engineer already knows.

When this series started in February, MLOps felt like a separate discipline. Specialized tools. Unfamiliar workflows. A whole new vocabulary that seemed disconnected from everything you already knew.

25 posts later, here is what actually happened: every single pattern mapped back to something you have been doing for years.

The Complete Architecture

Eight layers. Each solves a specific production problem.

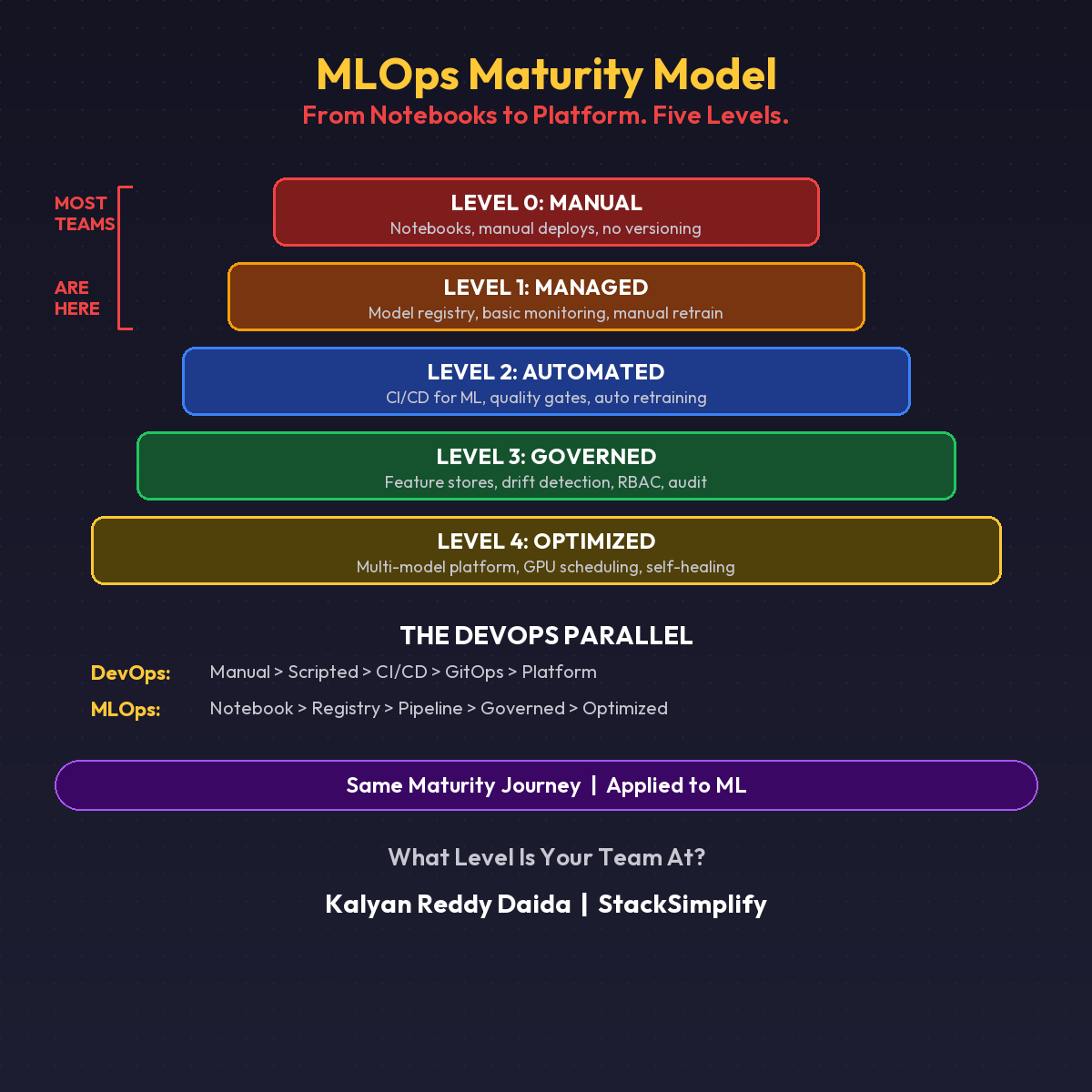

MLOps Maturity Model: From Notebooks to Platform in 5 Levels

Level 0: Jupyter notebook in production. Level 4: Fully automated ML lifecycle.

Most teams think they are somewhere in the middle. Most teams are wrong.

Here is the MLOps Maturity Model. Five levels, from chaos to platform.

The Five Levels

| Level | Name | What It Looks Like |
|---|---|---|
| 0 | Manual | Notebooks copied to prod. No versioning. Single person dependency. |
| 1 | Managed | Model registry, basic monitoring, manual retraining with a process. |
| 2 | Automated | CI/CD pipelines, automated retraining triggers, quality gates. |
| 3 | Governed | Feature stores, A/B testing, drift-triggered retraining, RBAC, audit trails. |
| 4 | Optimized | Multi-model platform, GPU scheduling, cost optimization, self-healing. |
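
One way to make the table above concrete is a small self-assessment helper. This is a minimal sketch, not the series' own tooling: the capability names are hypothetical labels paraphrased from the table, and it assumes levels are cumulative, so a team only reaches a level once every lower level is fully in place.

```python
from enum import IntEnum


class MaturityLevel(IntEnum):
    MANUAL = 0
    MANAGED = 1
    AUTOMATED = 2
    GOVERNED = 3
    OPTIMIZED = 4


# Hypothetical capability flags, paraphrased from the table rows.
REQUIREMENTS = {
    MaturityLevel.MANAGED: {"model_registry", "basic_monitoring"},
    MaturityLevel.AUTOMATED: {"ci_cd_pipelines", "automated_retraining", "quality_gates"},
    MaturityLevel.GOVERNED: {"feature_store", "ab_testing", "drift_retraining", "rbac", "audit_trails"},
    MaturityLevel.OPTIMIZED: {"multi_model_platform", "gpu_scheduling", "cost_optimization", "self_healing"},
}


def assess(capabilities: set[str]) -> MaturityLevel:
    """Return the highest level whose requirements, and all lower ones, are met."""
    level = MaturityLevel.MANUAL
    for candidate in sorted(REQUIREMENTS):
        if REQUIREMENTS[candidate] <= capabilities:
            level = candidate
        else:
            break  # Assumption: levels are cumulative, so stop at the first gap.
    return level


# A team with a feature store and RBAC but no CI/CD pipelines.
team = {"model_registry", "basic_monitoring", "feature_store", "rbac"}
print(assess(team).name)
```

Under the cumulative assumption, a team that adopts Level 3 tooling without the Level 2 pipelines still assesses as Managed, which is one reading of why teams tend to overestimate where they sit.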

Level 0: Manual

Notebooks copied to production servers. Models deployed by the person who trained them. No versioning. No monitoring. No rollback plan.

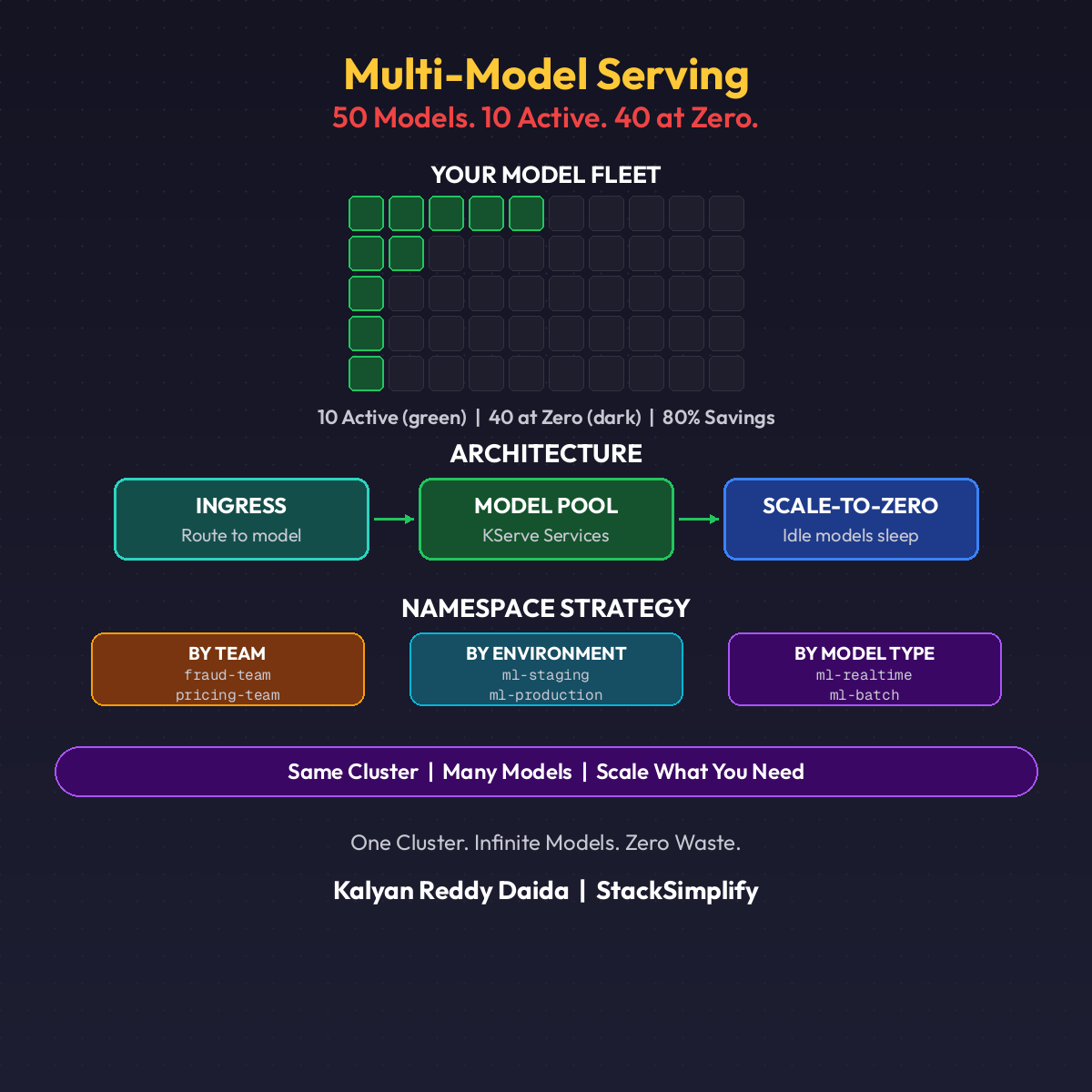

Multi-Model Serving on Kubernetes: 50 Models, One Cluster

50 models. 10 active. 40 at zero. One cluster.

That is the reality of a mature ML platform. Not one model per team. Not one namespace per endpoint. Dozens of models sharing infrastructure, scaling independently, and costing almost nothing when idle.
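
The 10-active, 40-at-zero split can be illustrated with a scale-to-zero rule. This is a rough sketch under stated assumptions, not the behavior of any particular autoscaler: the traffic figures, the `target_rps_per_replica` threshold, and the `max_replicas` cap are all made up for illustration.

```python
import math


def desired_replicas(
    requests_per_second: float,
    target_rps_per_replica: float = 20.0,  # hypothetical capacity per replica
    max_replicas: int = 10,                # hypothetical per-model cap
) -> int:
    """Scale each model independently; idle models drop to zero replicas."""
    if requests_per_second <= 0:
        return 0
    return min(max_replicas, math.ceil(requests_per_second / target_rps_per_replica))


# Hypothetical traffic: 50 models, of which only the first 10 receive requests.
traffic = {f"model-{i:02d}": (35.0 if i < 10 else 0.0) for i in range(50)}

replicas = {name: desired_replicas(rps) for name, rps in traffic.items()}
active = [name for name, count in replicas.items() if count > 0]
idle = [name for name, count in replicas.items() if count == 0]

print(f"{len(active)} active, {len(idle)} at zero, {sum(replicas.values())} replicas total")
```

Because each model's replica count depends only on its own traffic, the idle models hold no replicas at all in this sketch, which is where the "costing almost nothing when idle" claim comes from.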

Most teams never get here. They get stuck at the single-model trap.

The Single-Model Trap

Team A deploys their fraud model. Gets its own namespace, its own Istio gateway, its own monitoring stack. Works great.

Ready to Level Up Your Cloud Skills?

Join 383,000+ engineers who are building real-world cloud infrastructure with StackSimplify courses.